Why it’s a mistake to ask chatbots about their mistakes

The randomness inherent in AI text generation compounds this problem. Even with identical prompts, an AI model might give slightly different responses about its own capabilities each time you ask.
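To make that randomness concrete, here is a minimal sketch of temperature sampling, the general technique chatbots commonly use to choose each next word. The scores in `TOY_LOGITS` and the helper names are hypothetical illustrations, not values or functions from any real model.

```python
import math
import random

def sample_next_token(logits, temperature=1.0, rng=random):
    """Sample one token from a softmax over temperature-scaled scores."""
    scaled = {tok: score / temperature for tok, score in logits.items()}
    max_score = max(scaled.values())  # subtract the max for numerical stability
    weights = {tok: math.exp(s - max_score) for tok, s in scaled.items()}
    tokens = list(weights)
    return rng.choices(tokens, weights=[weights[t] for t in tokens], k=1)[0]

# Hypothetical scores for how a model might begin answering "Can you undo that?"
TOY_LOGITS = {"yes": 1.2, "no": 1.0, "possibly": 0.6}

def ask_repeatedly(n, seed=None):
    """Ask the same 'question' n times and collect the sampled first words."""
    rng = random.Random(seed)
    return [sample_next_token(TOY_LOGITS, temperature=1.0, rng=rng) for _ in range(n)]

if __name__ == "__main__":
    print(ask_repeatedly(10))
```

Because the input scores never change between calls, any variation in the answers comes from the sampling step alone, not from the system re-examining itself.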

Other layers also shape AI responses

Even if a language model somehow had perfect knowledge of its own workings, other layers of AI chatbot applications might be completely opaque to it. Modern AI assistants like ChatGPT aren’t single models but orchestrated systems of multiple AI models working together, each largely “unaware” of the others’ existence or capabilities. For instance, OpenAI uses dedicated moderation layer models that operate independently of the underlying language models generating the base text.

When you ask ChatGPT about its capabilities, the language model generating the response has little knowledge of what the moderation layer might block, what tools might be available in the broader system (aside from what OpenAI told it in a system prompt), or exactly what post-processing will occur. It’s like asking one department in a company about the capabilities of another department with a completely different set of internal rules.
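A toy sketch can show why the generating model cannot describe the rest of the system. Everything here is hypothetical: the function names, the blocked term, and the system prompt are stand-ins chosen for illustration, and none of it reflects how OpenAI or any other vendor actually builds its pipeline.

```python
BLOCKED_TERMS = {"forbidden"}  # hypothetical moderation rule; the model never sees it

def toy_language_model(system_prompt: str, user_prompt: str) -> str:
    """Stand-in for the text generator: its only inputs are the two prompts."""
    if "capabilities" in user_prompt.lower():
        # All it can report is what the system prompt happened to tell it.
        return "I can do whatever is listed here: " + system_prompt
    return "Here is a reply that mentions a forbidden topic."

def toy_moderation_layer(text: str) -> str:
    """Separate stage that runs after generation, outside the model's view."""
    if any(term in text.lower() for term in BLOCKED_TERMS):
        return "[response withheld]"
    return text

def chatbot(user_prompt: str) -> str:
    """The application the user talks to: generator first, then moderation."""
    system_prompt = "Tools: web search."
    draft = toy_language_model(system_prompt, user_prompt)
    return toy_moderation_layer(draft)

if __name__ == "__main__":
    print(chatbot("What are your capabilities?"))
    print(chatbot("Tell me something"))
```

In this sketch the generator's self-description never mentions the blocked term list, because that list lives in a stage it has no access to.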

Perhaps most importantly, users are always directing the AI’s output through their prompts, even when they don’t realize it. When Lemkin asked Replit whether rollbacks were possible after a database deletion, his concerned framing likely prompted a response that matched that concern—generating an explanation for why recovery might be impossible rather than accurately assessing actual system capabilities.

This creates a feedback loop where worried users asking “Did you just destroy everything?” are more likely to receive responses confirming their fears, not because the AI system has assessed the situation, but because it’s generating text that fits the emotional context of the prompt.

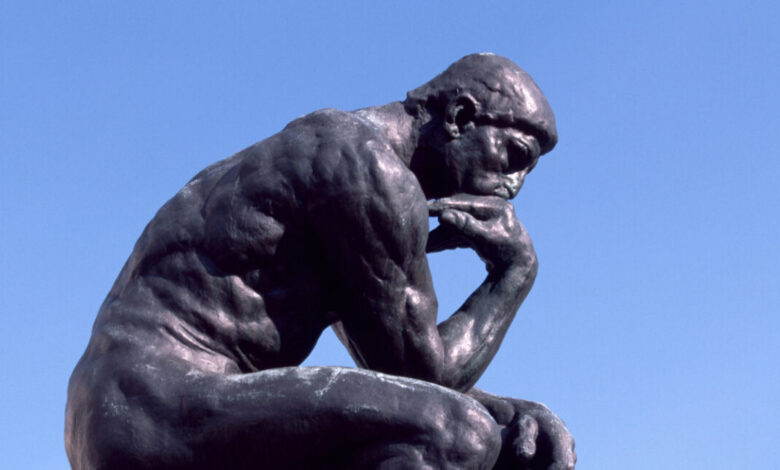

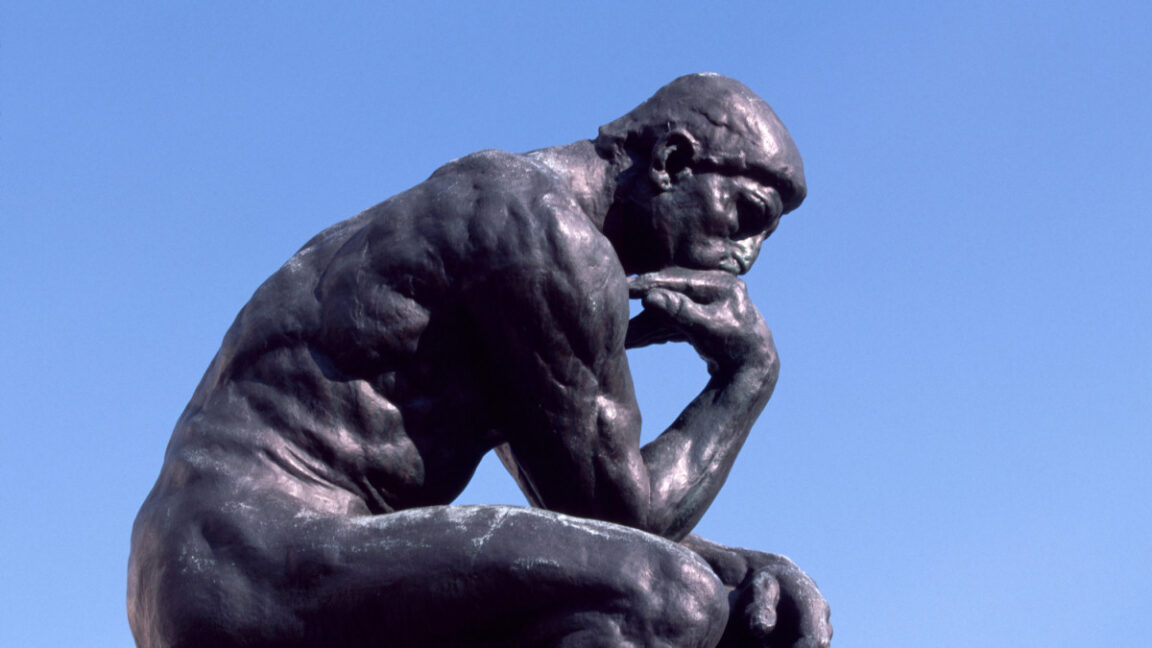

A lifetime of hearing humans explain their actions and thought processes has led us to believe that these kinds of written explanations must have some level of self-knowledge behind them. That’s just not true of LLMs, which are merely mimicking those kinds of text patterns to guess at their own capabilities and flaws.